C. J. Díaz Baso1,2 and A. Asensio Ramos1,2

1. Instituto de Astrofísica de Canarias, Calle Vía Láctea, 38205 La Laguna, Tenerife, Spain

2. Departamento de Astrofísica, Universidad de La Laguna, 38206 La Laguna, Tenerife, Spain

The Helioseismic and Magnetic Imager (HMI) is a space-borne instrument that delivers full-disk images (plus magnetograms and dopplergrams) of the Sun every 45 s (or every 720 s for a better signal-to-noise ratio) with a spatial resolution of ~1.0″ and a sampling of ~0.5″/pix. Despite the enormous advantage of having such a synoptic space telescope free from the disturbing effects of Earth’s atmosphere, its spatial resolution is not sufficient to track many of the small-scale solar structures of interest. Images with a better spatial resolution, in which the effect of the telescope PSF has been compensated, would be preferable. To that end we have developed a new method to simultaneously deconvolve and super-resolve HMI images and magnetograms.

This “super-resolution + deconvolution” problem is extremely ill-posed, even more so than the usual deconvolution that only corrects for the effect of the PSF: a multiplicity (potentially an infinite number) of solutions exists. Despite this difficulty, deep learning has recently been applied with great success to single-image deconvolution and super-resolution of natural images. Moreover, because solar images show much lower variability than natural images, the same approach gives much better and more robust results when applied to the Sun.

The method, which we term Enhance, is based on two deep Convolutional Neural Networks (CNN) that take patches of HMI observations as input and deconvolve and super-resolve the HMI continuum images and magnetograms by a factor of 2. In contrast to the widely used Fully Connected Network, in which every input is connected to every neuron (each connection with its own weight), this type of neural network convolves the input with a kernel of weights. Because the same weights are shared across the whole input, the number of unknowns is drastically reduced, and features can be detected in an image irrespective of where they are located[1].
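The effect of weight sharing can be illustrated with a minimal NumPy sketch. It is not taken from the Enhance code: the patch size, kernel size and the helper `conv2d_valid` are hypothetical choices made only for this illustration. It compares the number of weights of a fully connected mapping with that of a single convolution kernel, and checks that a shifted feature produces an equally shifted response.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Plain 2-D cross-correlation ('valid' mode), the basic CNN operation."""
    kh, kw = kernel.shape
    oh = image.shape[0] - kh + 1
    ow = image.shape[1] - kw + 1
    out = np.zeros((oh, ow))
    for i in range(oh):
        for j in range(ow):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Hypothetical sizes, chosen only for illustration.
patch_size = 50    # pixels per side of an input patch
kernel_size = 3    # pixels per side of the convolution kernel

# Fully connected: one weight per (input pixel, output pixel) pair.
dense_weights = (patch_size ** 2) ** 2
# Convolution: the same small kernel is reused at every position.
conv_weights = kernel_size ** 2
print(dense_weights, conv_weights)

# The same feature placed at two positions gives the same (shifted) response.
rng = np.random.default_rng(0)
kernel = rng.normal(size=(kernel_size, kernel_size))
image = np.zeros((20, 20))
image[5:8, 5:8] = 1.0
shifted = np.roll(image, shift=(4, 6), axis=(0, 1))

response = conv2d_valid(image, kernel)
response_shifted = conv2d_valid(shifted, kernel)
same = np.allclose(response_shifted[4:, 6:], response[:-4, :-6])
print(same)
```

With these illustrative sizes the fully connected mapping needs millions of weights, whereas the convolution needs nine, regardless of the size of the image it is applied to.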

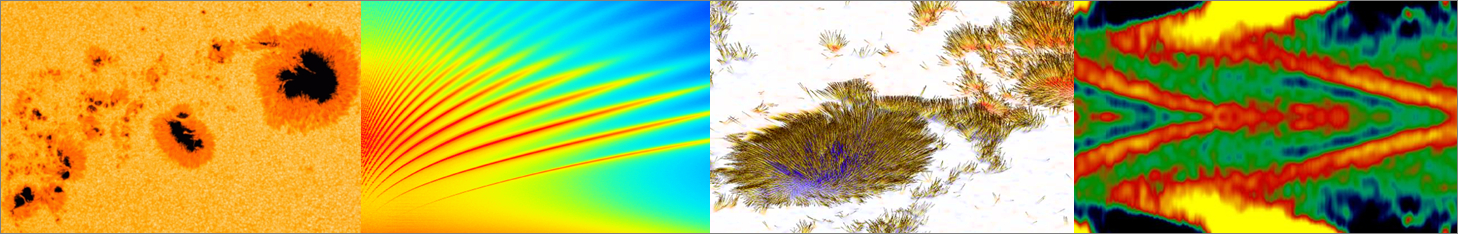

Figure 1| Comparison of the output of the neural network with the synthetic validation set for the continuum images.

The networks are trained by iteratively updating the weights to minimize a loss function, defined as the squared difference between the output of the network and the desired output. To generate a suitable training set we have used synthetic continuum images and synthetic magnetograms from the simulation of the formation of a solar active region described by Cheung et al.[2]. The synthetic images (and magnetograms) are then degraded to simulate a real HMI observation: all the frames of the simulation are convolved with the HMI PSF[3] and resampled to 0.5″/pixel. We then randomly extract 50000 patches, which constitute the input patches of the training set, and a smaller set of 5000 patches, which acts as a validation set to check for overfitting. The targets of the training set are obtained in a similar way, but convolving instead with the Airy function of a telescope of 28 cm diameter, twice that of HMI.
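The degradation and patch-extraction steps described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: Gaussian kernels stand in for both the measured HMI PSF and the 28 cm Airy function, a random array stands in for a simulation frame, the targets are assumed to be sampled twice as finely as the inputs (consistent with super-resolving by a factor of 2), and all function names are hypothetical.

```python
import numpy as np

def gaussian_psf(size, fwhm_pix):
    """Normalised 2-D Gaussian, used here only as a placeholder PSF."""
    sigma = fwhm_pix / (2.0 * np.sqrt(2.0 * np.log(2.0)))
    ax = np.arange(size) - size // 2
    xx, yy = np.meshgrid(ax, ax)
    psf = np.exp(-(xx ** 2 + yy ** 2) / (2.0 * sigma ** 2))
    return psf / psf.sum()

def convolve_fft(image, psf):
    """Circular convolution via FFT; psf must match image shape and be centred."""
    otf = np.fft.fft2(np.fft.ifftshift(psf))
    return np.real(np.fft.ifft2(np.fft.fft2(image) * otf))

def rebin(image, factor):
    """Coarsen the sampling by averaging factor x factor pixel blocks."""
    h = image.shape[0] - image.shape[0] % factor
    w = image.shape[1] - image.shape[1] % factor
    blocks = image[:h, :w].reshape(h // factor, factor, w // factor, factor)
    return blocks.mean(axis=(1, 3))

def extract_patches(inputs, targets, n_patches, size, scale, rng):
    """Cut co-located random patches; target patches are `scale` times larger."""
    xs, ys = [], []
    for _ in range(n_patches):
        i = rng.integers(0, inputs.shape[0] - size + 1)
        j = rng.integers(0, inputs.shape[1] - size + 1)
        xs.append(inputs[i:i + size, j:j + size])
        ys.append(targets[scale * i:scale * (i + size),
                          scale * j:scale * (j + size)])
    return np.stack(xs), np.stack(ys)

def squared_error_loss(output, target):
    """Mean squared difference between network output and desired output."""
    return np.mean((output - target) ** 2)

rng = np.random.default_rng(42)
frame = rng.random((128, 128))                 # placeholder simulation frame

psf_input = gaussian_psf(128, fwhm_pix=4.0)    # stand-in for the HMI PSF
psf_target = gaussian_psf(128, fwhm_pix=2.0)   # stand-in for the 28 cm Airy PSF

low_res = rebin(convolve_fft(frame, psf_input), 2)   # inputs, coarse sampling
high_res = convolve_fft(frame, psf_target)           # targets, 2x finer sampling

x, y = extract_patches(low_res, high_res, n_patches=8, size=16, scale=2, rng=rng)

# Loss of a trivial baseline that just repeats each input pixel 2x2 times.
baseline = x.repeat(2, axis=1).repeat(2, axis=2)
print(x.shape, y.shape, squared_error_loss(baseline, y))
```

In a real training run the baseline would be replaced by the network output, and the loss would be minimized over the weights with a gradient-based optimizer.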

Figure 2| From left to right: the original HMI images, the output of the neural network, and the degraded Hinode image.

When the training process is finished, we check the results with some of the patches from the validation set. We find that we are able to greatly improve the fine structure, especially in the penumbra and the protrusions in the umbra (see Fig. 1). After that, the trained networks are applied to real HMI data. To validate the output of our neural network we have selected observations from the Broadband Filter Imager of the Solar Optical Telescope onboard Hinode, and degraded them with the diffraction PSF of a 28 cm diameter telescope. This test shows very good consistency between each pair of images (see Fig. 2). It is clear that Enhance recovers small-scale structures (such as small, weak umbral dots) that are evident in the Hinode data but completely smeared out in HMI.
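As a back-of-the-envelope check of the apertures involved, the Rayleigh diffraction limit, 1.22 λ/D, can be evaluated for a 14 cm aperture (half of the 28 cm quoted above) and for the 28 cm target aperture. The wavelength of 617.3 nm (the Fe I line observed by HMI) is an assumption introduced here and is not stated in the text; the function name is hypothetical.

```python
import math

ARCSEC_PER_RAD = 180.0 * 3600.0 / math.pi

def rayleigh_limit_arcsec(wavelength_m, diameter_m):
    """Rayleigh criterion for a circular aperture, in arcseconds."""
    return 1.22 * wavelength_m / diameter_m * ARCSEC_PER_RAD

wavelength = 617.3e-9   # assumed observing wavelength in metres (Fe I 6173 A)

limit_hmi = rayleigh_limit_arcsec(wavelength, 0.14)      # 14 cm aperture
limit_target = rayleigh_limit_arcsec(wavelength, 0.28)   # 28 cm aperture

print(f"14 cm: {limit_hmi:.2f} arcsec, 28 cm: {limit_target:.2f} arcsec")
```

Under this assumption the 14 cm limit comes out close to the ~1.0″ resolution quoted for HMI, and doubling the aperture halves it, which is consistent with a target resolution twice as fine as that of the original data.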

Figure 3| Example of our neural network applied to the intensity (left) and the magnetogram (right) of the same region. The upper half shows the original HMI image, while the lower half shows the result after applying the neural network.

We also note that the deconvolution of magnetograms is even more important because of the strong impact of stray light. Depending on the structure, Enhance recovers magnetic fields up to two times stronger, as signal smeared into the surrounding quiet Sun is put back in its original location (see Fig. 3). We also carried out a comparison with a standard Richardson-Lucy (RL) algorithm. It shows that our network is orders of magnitude faster than RL, does not create noisy artifacts, and estimates the magnetic field as robustly as the RL method.
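For reference, the classical Richardson-Lucy iteration used as the comparison baseline can be sketched in a few lines of NumPy. This is a generic textbook version, not the implementation used for the comparison: it assumes non-negative data (so it applies as written to intensity-like images rather than signed magnetograms), a circular FFT convolution, and a Gaussian placeholder PSF; all names are hypothetical.

```python
import numpy as np

def gaussian_psf(size, sigma):
    """Normalised 2-D Gaussian, used here only as a placeholder PSF."""
    ax = np.arange(size) - size // 2
    xx, yy = np.meshgrid(ax, ax)
    psf = np.exp(-(xx ** 2 + yy ** 2) / (2.0 * sigma ** 2))
    return psf / psf.sum()

def richardson_lucy(observed, psf, n_iter=50, eps=1e-12):
    """Richardson-Lucy deconvolution with circular (FFT) convolutions."""
    otf = np.fft.fft2(np.fft.ifftshift(psf))

    def conv(img, transfer):
        return np.real(np.fft.ifft2(np.fft.fft2(img) * transfer))

    # Start from a flat image with the same mean as the data.
    estimate = np.full_like(observed, observed.mean())
    for _ in range(n_iter):
        blurred = conv(estimate, otf)
        ratio = observed / np.maximum(blurred, eps)
        # Multiplying by conj(otf) correlates with the PSF (flipped kernel).
        estimate = estimate * conv(ratio, np.conj(otf))
    return estimate

# Synthetic test: bright points on a flat background, blurred by the PSF.
truth = np.ones((64, 64))
truth[20, 20] = 100.0
truth[40, 45] = 60.0
psf = gaussian_psf(64, sigma=1.5)
otf = np.fft.fft2(np.fft.ifftshift(psf))
observed = np.real(np.fft.ifft2(np.fft.fft2(truth) * otf))

restored = richardson_lucy(observed, psf, n_iter=50)
print(observed.max(), restored.max())
```

Each iteration requires several FFTs of the full image and many iterations are needed, which is why an iterative scheme of this kind is much slower than a single forward pass through a trained network.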

The code is open source and available to the community. It provides the methods needed to apply the trained networks used in this work to HMI images, or to re-train them using new data: https://github.com/cdiazbas/enhance.

References

[1] Asensio Ramos, A., Requerey, I. S., & Vitas, N. 2017, A&A, 604, A11

[2] Cheung, M. C. M., Rempel, M., Title, A. M., & Schüssler, M. 2010, ApJ, 720, 233

[3] Wachter, R., Schou, J., Rabello-Soares, M. C., et al. 2012, Sol. Phys., 275, 261
